The impact factor (IF) or journal impact factor (JIF) of an academic journal is a scientometric index calculated by Clarivate that reflects the yearly mean number of citations of articles published in the last two years in a given journal, as indexed by Clarivate's Web of Science.
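As a rough illustration of the two-year calculation described above, the following sketch divides the citations received in a given year by items published in the two preceding years by the number of citable items published in those same two years. The function name, the dictionary layout, and the sample figures are hypothetical and chosen only for illustration; this is not Clarivate's actual procedure, which also involves decisions about which items count as citable.

```python
def two_year_impact_factor(year, citations_to, citable_items):
    """Approximate a two-year impact factor for `year`.

    citations_to:  maps a publication year to the number of citations
                   received during `year` by items published in that year
                   (hypothetical input layout).
    citable_items: maps a publication year to the number of citable items
                   the journal published in that year.
    """
    window = (year - 1, year - 2)  # the two preceding publication years
    cites = sum(citations_to.get(y, 0) for y in window)
    items = sum(citable_items.get(y, 0) for y in window)
    if items == 0:
        raise ValueError("no citable items in the two-year window")
    return cites / items


# Made-up numbers: 400 citations in 2023 to 200 items from 2021-2022.
example = two_year_impact_factor(
    2023,
    citations_to={2022: 150, 2021: 250},
    citable_items={2022: 90, 2021: 110},
)
print(example)
```

Because the result is a mean, a handful of very highly cited articles can raise it substantially, which is one reason it says little about any individual article.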

Bibliometrics is the application of statistical methods to the study of bibliographic data, especially in scientific and library and information science contexts, and is closely associated with scientometrics to the point that both fields largely overlap.

Scientometrics is a subfield of informetrics that studies quantitative aspects of scholarly literature. Major research issues include the measurement of the impact of research papers and academic journals, the understanding of scientific citations, and the use of such measurements in policy and management contexts. In practice there is a significant overlap between scientometrics and other scientific fields such as information systems, information science, science of science policy, sociology of science, and metascience. Critics have argued that overreliance on scientometrics has created a system of perverse incentives, producing a publish or perish environment that leads to low-quality research.

Citation analysis is the examination of the frequency, patterns, and graphs of citations in documents. It uses the directed graph of citations — links from one document to another document — to reveal properties of the documents. A typical aim would be to identify the most important documents in a collection. A classic example is that of the citations between academic articles and books. For another example, judges of law support their judgements by referring back to judgements made in earlier cases. An additional example is provided by patents which contain prior art, citation of earlier patents relevant to the current claim. The digitization of patent data and increasing computing power have led to a community of practice that uses these citation data to measure innovation attributes, trace knowledge flows, and map innovation networks.
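One simple way to look for the most important documents in a citation graph is to count incoming citations (the in-degree of each node). The sketch below assumes the graph is given as a list of `(citing, cited)` pairs; the function name and the document labels are hypothetical, and in-degree is only the most basic of many possible importance measures.

```python
from collections import Counter


def most_cited(citations, top_n=3):
    """Return the `top_n` documents with the most incoming citations.

    citations: iterable of (citing, cited) pairs, i.e. the directed
               edges of the citation graph.
    """
    # In-degree: how many times each document appears as the target of a link.
    in_degree = Counter(cited for _citing, cited in citations)
    return in_degree.most_common(top_n)


# Toy graph: D cites A, B and C; C cites A and B; B cites A.
edges = [("B", "A"), ("C", "A"), ("D", "A"),
         ("C", "B"), ("D", "B"), ("D", "C")]
print(most_cited(edges, top_n=2))
```

The same edge-list representation would apply to the legal and patent examples, with judgements or patents as the nodes.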

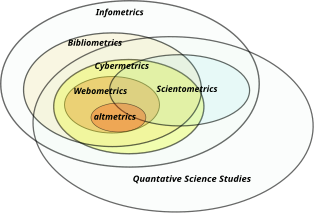

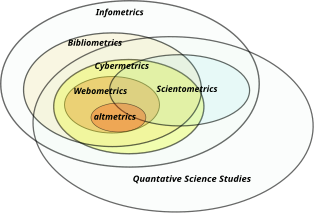

Informetrics is the study of quantitative aspects of information; it is an extension and evolution of traditional bibliometrics and scientometrics. Informetrics uses bibliometric and scientometric methods to study mainly the problems of literature information management and the evaluation of science and technology. It is an independent discipline that uses quantitative methods from mathematics and statistics to study the processes, phenomena, and laws of informetrics. Informetrics has gained more attention as a common scientific method for academic evaluation, the identification of research hotspots within disciplines, and trend analysis.

Citation impact or citation rate is a measure of how many times an academic journal article, book, or author is cited by other articles, books, or authors. Citation counts are interpreted as measures of the impact or influence of academic work and have given rise to the field of bibliometrics or scientometrics, specializing in the study of patterns of academic impact through citation analysis. The importance of journals can be measured by the average citation rate, the ratio of the number of citations to the number of articles published within a given time period and in a given index, such as the journal impact factor or the CiteScore. Citation impact is used by academic institutions in decisions about academic tenure, promotion, and hiring, and hence also by authors in deciding which journal to publish in. Citation-like measures are also used in other fields that do ranking, such as Google's PageRank algorithm, software metrics, college and university rankings, and business performance indicators.

The h-index is an author-level metric that measures both the productivity and citation impact of the publications, initially used for an individual scientist or scholar. The h-index correlates with success indicators such as winning the Nobel Prize, being accepted for research fellowships and holding positions at top universities. The index is based on the set of the scientist's most cited papers and the number of citations that they have received in other publications. The index has more recently been applied to the productivity and impact of a scholarly journal as well as a group of scientists, such as a department or university or country. The index was suggested in 2005 by Jorge E. Hirsch, a physicist at UC San Diego, as a tool for determining theoretical physicists' relative quality and is sometimes called the Hirsch index or Hirsch number.
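Under the usual definition, an author has index h if h of their papers have each been cited at least h times. The sketch below computes this from a list of per-paper citation counts; the function name and the sample counts are hypothetical and used only for illustration.

```python
def h_index(citation_counts):
    """Largest h such that h papers have at least h citations each."""
    # Rank papers from most cited to least cited.
    ranked = sorted(citation_counts, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank  # the top `rank` papers all have >= `rank` citations
        else:
            break     # later papers have even fewer citations
    return h


# Five papers with made-up citation counts.
print(h_index([10, 8, 5, 4, 3]))
```

Note that a single very highly cited paper does not raise the index on its own, which is how the metric combines productivity with citation impact.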

Journal Citation Reports (JCR) is an annual publication by Clarivate. It has been integrated with the Web of Science and is accessed from the Web of Science Core Collection. It provides information about academic journals in the natural and social sciences, including impact factors. JCR was originally published as a part of the Science Citation Index. Currently, the JCR, as a distinct service, is based on citations compiled from the Science Citation Index Expanded and the Social Sciences Citation Index. As of the 2023 edition, journals from the Arts and Humanities Citation Index and the Emerging Sources Citation Index have also been included.

Journal ranking is widely used in academic circles in the evaluation of an academic journal's impact and quality. Journal rankings are intended to reflect the place of a journal within its field, the relative difficulty of being published in that journal, and the prestige associated with it. They have been introduced as official research evaluation tools in several countries.

An academic discipline or academic field is a subdivision of knowledge that is taught and researched at the college or university level. Disciplines are defined and recognized by the academic journals in which research is published, and the learned societies and academic departments or faculties within colleges and universities to which their practitioners belong. Academic disciplines are conventionally divided into the humanities, the scientific disciplines, and the formal sciences like mathematics and computer science; the social sciences are sometimes considered a fourth category.

A bibliometrician is a researcher or a specialist in bibliometrics. The term is near-synonymous with informetrician, scientometrician, and webometrician, the last being someone who studies webometrics.

In scholarly and scientific publishing, altmetrics are non-traditional bibliometrics proposed as an alternative or complement to more traditional citation impact metrics, such as impact factor and h-index. The term altmetrics was proposed in 2010, as a generalization of article level metrics, and has its roots in the #altmetrics hashtag. Although altmetrics are often thought of as metrics about articles, they can be applied to people, journals, books, data sets, presentations, videos, source code repositories, web pages, etc.

The San Francisco Declaration on Research Assessment (DORA) is a statement that denounces the practice of correlating the journal impact factor to the merits of a specific scientist's contributions. According to the statement, this practice creates biases and inaccuracies when appraising scientific research. It also states that the impact factor is not to be used as a substitute "measure of the quality of individual research articles, or in hiring, promotion, or funding decisions".

Article-level metrics are citation metrics which measure the usage and impact of individual scholarly articles.

The CWTS Leiden Ranking is an annual global university ranking based exclusively on bibliometric indicators. The rankings are compiled by the Centre for Science and Technology Studies at Leiden University in the Netherlands. The Clarivate Analytics bibliographic database Web of Science is used as the source of the publication and citation data.

The University Ranking by Academic Performance (URAP) is a university ranking developed by the Informatics Institute of Middle East Technical University. Since 2010, it has been publishing annual national and global college and university rankings for the top 2000 institutions. The scientometric measurement of URAP is based on data obtained from the Institute for Scientific Information via Web of Science and InCites. For global rankings, URAP employs indicators of research performance including the number of articles, citations, total documents, article impact total, citation impact total, and international collaboration. In addition to global rankings, URAP publishes regional rankings for universities in Turkey using additional indicators, such as the number of students and faculty members, obtained from the Center of Measuring, Selection and Placement (ÖSYM).

Author-level metrics are citation metrics that measure the bibliometric impact of individual authors, researchers, academics, and scholars. Many metrics have been developed that take into account varying numbers of factors.

There are a number of approaches to ranking academic publishing groups and publishers. Rankings rely on subjective impressions by the scholarly community, on analyses of prize winners of scientific associations, discipline, a publisher's reputation, and its impact factor.

Ronald Rousseau is a Belgian mathematician and information scientist. He has obtained an international reputation for his research on indicators and citation analysis in the fields of bibliometrics and scientometrics.

The open science movement has expanded the uses of scientific output beyond specialized academic circles.