Computer vision tasks include methods for acquiring, processing, analyzing, and understanding digital images, and for extracting high-dimensional data from the real world in order to produce numerical or symbolic information, e.g. in the form of decisions. Understanding in this context means the transformation of visual images into descriptions of the world that make sense to thought processes and can elicit appropriate action. This image understanding can be seen as the disentangling of symbolic information from image data using models constructed with the aid of geometry, physics, statistics, and learning theory.

AIBO is a series of robotic dogs designed and manufactured by Sony. Sony announced a prototype AIBO in mid-1998, and the first consumer model was introduced on 11 May 1999. New models were released every year until 2006. Although most models were dogs, inspirations ranged from specific breeds such as huskies, Jack Russell terriers, and bull terriers to lion cubs and space explorers. Only the ERS-7, ERS-110/111, and ERS-1000 versions were explicitly described as a "robotic dog", but the ERS-210 can also be considered a dog because of its Jack Russell terrier appearance and face. In 2006, AIBO was inducted into the Carnegie Mellon University Robot Hall of Fame.

Machine vision (MV) is the technology and methods used to provide imaging-based automatic inspection and analysis for applications such as automatic inspection, process control, and robot guidance, usually in industry. Machine vision refers to many technologies, software and hardware products, integrated systems, actions, methods, and expertise. As a systems engineering discipline, machine vision can be considered distinct from computer vision, which is a form of computer science. Machine vision attempts to integrate existing technologies in new ways and apply them to solve real-world problems. The term is the prevalent one for these functions in industrial automation environments, but it is also used in other environments, such as vehicle guidance.

The Norwegian Institute of Technology was a science institute in Trondheim, Norway. It was established in 1910, and existed as an independent technical university for 58 years, after which it was merged into the University of Trondheim as an independent college.

FANUC is a Japanese group of companies that provide automation products and services such as robotics and computer numerical control (CNC) systems. These companies are principally FANUC Corporation of Japan, FANUC America Corporation of Rochester Hills, Michigan, USA, and FANUC Europe Corporation S.A. of Luxembourg.

3D scanning is the process of analyzing a real-world object or environment to collect three dimensional data of its shape and possibly its appearance. The collected data can then be used to construct digital 3D models.
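Many 3D scanners, such as time-of-flight and structured-light devices, first produce a depth map: one distance reading per pixel. As a minimal sketch, assuming an ideal pinhole camera with intrinsics `fx`, `fy`, `cx`, `cy` (hypothetical parameter names, all in pixels) and depth measured along the optical axis, that depth map can be back-projected into a point cloud, the raw material from which a 3D model is later built:

```python
import numpy as np


def depth_to_points(depth: np.ndarray, fx: float, fy: float,
                    cx: float, cy: float) -> np.ndarray:
    """Back-project an H x W depth map into an N x 3 array of (x, y, z) points.

    Assumes an ideal pinhole camera (no lens distortion): pixel (u, v) with
    depth z maps to x = (u - cx) * z / fx and y = (v - cy) * z / fy.
    """
    h, w = depth.shape
    # u indexes columns and v indexes rows; both grids have shape (h, w).
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    z = depth.astype(float)
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    points = np.stack([x, y, z], axis=-1).reshape(-1, 3)
    # Keep only pixels with a valid (positive) depth reading.
    return points[points[:, 2] > 0]
```

Points come out in row-major pixel order, and pixels with a zero reading (no return) are dropped. Turning the cloud into a surface mesh is a separate step not shown here.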

The following outline is provided as an overview of and topical guide to computer vision:

OmniVision Technologies Inc. is an American subsidiary of Will Semiconductor, a Chinese semiconductor device and mixed-signal integrated circuit design house. The company designs and develops digital imaging products for use in mobile phones, laptops, netbooks, webcams, security and surveillance cameras, entertainment, automotive, and medical imaging systems. Headquartered in Santa Clara, California, OmniVision Technologies has offices in the US, Western Europe, and Asia.

Microsoft PixelSense was an interactive surface computing platform that allowed one or more people to use and touch real-world objects and share digital content at the same time. The PixelSense platform consisted of software and hardware products that combined vision-based multitouch PC hardware, 360-degree multiuser application design, and Windows software to create a natural user interface (NUI).

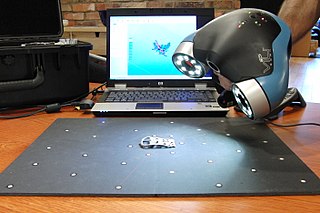

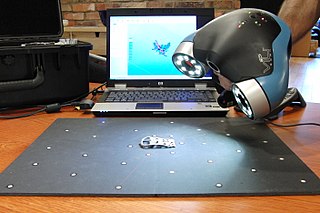

A structured-light 3D scanner is a 3D scanning device for measuring the three-dimensional shape of an object using projected light patterns and a camera system.
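One common pattern scheme, assumed here rather than taken from the text, projects a sequence of binary Gray-code stripe images so that each camera pixel records a bit sequence identifying the projector column that lit it. With a rectified camera-projector pair, depth then follows by triangulation as Z = f * B / (x_cam - x_proj), where f is the focal length in pixels and B the baseline. The sketch below simplifies heavily: bits are assumed already thresholded (real systems often also project inverted patterns to do this robustly), and the function names are hypothetical.

```python
import numpy as np


def gray_to_binary(gray: np.ndarray) -> np.ndarray:
    """Convert reflected binary Gray codes to plain binary integers, elementwise."""
    binary = gray.copy()
    shift = gray >> 1
    # binary = g ^ (g >> 1) ^ (g >> 2) ^ ... until the shifted value is zero.
    while np.any(shift):
        binary ^= shift
        shift >>= 1
    return binary


def decode_columns(bits: np.ndarray) -> np.ndarray:
    """Decode an (n_bits, H, W) stack of 0/1 images, most significant bit
    first, into the projector column index seen by each camera pixel."""
    gray = np.zeros(bits.shape[1:], dtype=np.int64)
    for k in range(bits.shape[0]):
        gray = (gray << 1) | bits[k].astype(np.int64)
    return gray_to_binary(gray)


def triangulate_depth(cam_cols: np.ndarray, proj_cols: np.ndarray,
                      focal_px: float, baseline_m: float) -> np.ndarray:
    """Depth from disparity for a rectified camera-projector pair.

    Pixels with non-positive disparity are marked invalid (NaN).
    """
    disparity = cam_cols - proj_cols
    depth = np.full(disparity.shape, np.nan)
    valid = disparity > 0
    depth[valid] = focal_px * baseline_m / disparity[valid]
    return depth
```

Gray codes are used instead of plain binary because adjacent stripes differ in only one bit, so a pixel on a stripe boundary is off by at most one column.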

A vision-guided robot (VGR) system is a robot fitted with one or more cameras that act as sensors, providing a secondary feedback signal to the robot controller so that it can move more accurately to a variable target position. VGR is transforming production processes by making robots more adaptable and easier to implement, while greatly reducing the cost and complexity of the fixed tooling previously needed to design and set up robotic cells for material handling, automated assembly, agriculture, the life sciences, and other applications.
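The feedback step can be illustrated with a simple 2D calibration, assumed here for a fixed camera looking down on a planar work area: a few pixel positions are paired with the robot coordinates the tool reaches at the same spots, an affine map is fitted by least squares, and each newly detected part's pixel location is converted into a corrected robot target. The function names are hypothetical, and real cells may need full 3D hand-eye calibration and lens-distortion correction.

```python
import numpy as np


def fit_pixel_to_robot(pixels: np.ndarray, robot_xy: np.ndarray) -> np.ndarray:
    """Fit a 2 x 3 affine matrix M such that robot_xy ≈ M @ [u, v, 1].

    pixels and robot_xy are N x 2 arrays of corresponding points, with
    N >= 3 and the pixel points not all collinear.
    """
    n = pixels.shape[0]
    # Homogeneous pixel coordinates: each row is [u, v, 1].
    design = np.hstack([pixels.astype(float), np.ones((n, 1))])
    # Least-squares solve of design @ X = robot_xy, where X = M.T (3 x 2).
    solution, *_ = np.linalg.lstsq(design, robot_xy.astype(float), rcond=None)
    return solution.T


def pixel_to_robot(affine: np.ndarray, u: float, v: float) -> np.ndarray:
    """Map a detected pixel location to a robot-plane target (x, y)."""
    return affine @ np.array([u, v, 1.0])
```

Using more than three calibration points lets the least-squares fit average out detection noise.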

GenICam is a generic programming interface for machine vision (industrial) cameras. The goal of the standard is to decouple the camera interface technology from the user application programming interface (API). GenICam is administered by the European Machine Vision Association (EMVA). Work on the standard began in 2003; the first module, GenApi, was ratified in 2006, and the final module, GenTL, was ratified in 2008.
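The decoupling idea can be sketched in a few lines. This is not the GenICam API: every class below is hypothetical, and the `feature_map` dictionary stands in for the XML description file that a GenICam camera supplies to map named features onto registers. Application code uses only feature names such as `Width` or `ExposureTime`, while the transport backend can be swapped without touching that code.

```python
from abc import ABC, abstractmethod


class CameraTransport(ABC):
    """Hypothetical transport-specific backend, one per physical interface."""

    @abstractmethod
    def read_register(self, address: int) -> int: ...

    @abstractmethod
    def write_register(self, address: int, value: int) -> None: ...


class InMemoryTransport(CameraTransport):
    """Stand-in backend that keeps registers in a dict, for use without hardware."""

    def __init__(self) -> None:
        self.registers: dict[int, int] = {}

    def read_register(self, address: int) -> int:
        return self.registers.get(address, 0)

    def write_register(self, address: int, value: int) -> None:
        self.registers[address] = value


class GenericCamera:
    """Application-facing object: features are accessed by name, and the
    name-to-register mapping comes from a per-camera description."""

    def __init__(self, transport: CameraTransport, feature_map: dict[str, int]):
        self._transport = transport
        self._features = feature_map

    def set(self, name: str, value: int) -> None:
        self._transport.write_register(self._features[name], value)

    def get(self, name: str) -> int:
        return self._transport.read_register(self._features[name])
```

The sketch treats every feature as an integer register; a real description also carries types, ranges, and access rules.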

A time-of-flight camera, also known as a time-of-flight sensor, is a range imaging camera system that measures the distance between the camera and the subject for each point of the image based on time of flight: the round-trip time of an artificial light signal provided by a laser or an LED. Laser-based time-of-flight cameras are part of a broader class of scannerless LIDAR, in which the entire scene is captured with each laser pulse, as opposed to point by point with a laser beam as in scanning LIDAR systems. Time-of-flight camera products for civil applications began to emerge around 2000, as semiconductor processes allowed the production of components fast enough for such devices. The systems cover ranges from a few centimeters up to several kilometers.
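Because the light travels to the subject and back, the distance is half the round-trip path: d = c * t / 2. A minimal sketch of that relation, assuming propagation at the vacuum speed of light (the refractive index of air is ignored) and using hypothetical function names:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact value in vacuum


def distance_from_round_trip(round_trip_s: float) -> float:
    """Distance to the subject from the round-trip time of a light signal."""
    # The light covers the camera-subject distance twice, so halve the path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_from_distance(distance_m: float) -> float:
    """Inverse relation: round-trip time corresponding to a given distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S
```

The inverse shows why fast components were a prerequisite: resolving 1 cm of distance means resolving about 67 picoseconds of round-trip time.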

PrimeSense was an Israeli 3D sensing company based in Tel Aviv. PrimeSense had offices in Israel, North America, Japan, Singapore, Korea, China and Taiwan. PrimeSense was bought by Apple Inc. for $360 million on November 24, 2013.

Intel RealSense Technology, formerly known as Intel Perceptual Computing, is a product range of depth and tracking technologies designed to give machines and devices depth perception capabilities. Owned by Intel, the technologies are used in autonomous drones, robots, AR/VR, smart home devices, and many other broad-market products.

PMD Technologies is a developer of CMOS semiconductor 3D time-of-flight (ToF) components and a provider of engineering support in the field of digital 3D imaging. The company is named after the Photonic Mixer Device (PMD) technology used in its products to detect 3D data in real time. The corporate headquarters of the company is located in Siegen, Germany.
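Photonic mixer devices are typically operated as continuous-wave time-of-flight sensors: the light source is intensity-modulated, and each pixel estimates the phase shift of the returning signal rather than timing a single pulse. The sketch below uses a textbook four-phase demodulation scheme, assumed here and not taken from PMD Technologies' own processing. It models the four correlation samples as A_k = B + a * cos(phi + k * pi / 2) and converts the recovered phase to distance with d = c * phi / (4 * pi * f_mod).

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def cw_tof_distance(a0: float, a1: float, a2: float, a3: float,
                    mod_freq_hz: float) -> float:
    """Distance from four correlation samples taken at 0, 90, 180 and 270 degrees.

    Under the assumed model A_k = B + a*cos(phi + k*pi/2):
    a3 - a1 = 2a*sin(phi) and a0 - a2 = 2a*cos(phi), so the offset B cancels.
    """
    phase = math.atan2(a3 - a1, a0 - a2)
    phase %= 2 * math.pi  # map the phase into [0, 2*pi)
    return SPEED_OF_LIGHT_M_PER_S * phase / (4 * math.pi * mod_freq_hz)


def ambiguity_range(mod_freq_hz: float) -> float:
    """Distance at which the phase wraps around and readings start to alias."""
    return SPEED_OF_LIGHT_M_PER_S / (2 * mod_freq_hz)
```

At an assumed 20 MHz modulation the phase wraps about every 7.5 m, so a subject at 9 m would read as roughly 1.5 m; practical systems often combine several modulation frequencies to resolve this, which is not shown here.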

Visage SDK is a multi-platform software development kit (SDK) created by Visage Technologies AB. Visage SDK allows software programmers to build facial motion capture and eye tracking applications.

Artec 3D is a developer and manufacturer of 3D scanning hardware and software. The company is headquartered in Luxembourg, with additional offices in the United States, China (Shanghai), and Montenegro (Bar). Artec 3D's products and services are used in various industries, including engineering, healthcare, media and design, entertainment, education, fashion, and historic preservation. In 2013, Artec 3D launched Shapify.me, an automated full-body 3D scanning system that creates 3D portraits called “Shapies.”

The Aphelion Imaging Software Suite is a software suite for image processing and image analysis applications that includes three base products: Aphelion Lab, Aphelion Dev, and Aphelion SDK. The suite also includes a set of extension programs that implement specific vertical applications benefiting from imaging techniques.

Mech-Mind Robotics, known simply as Mech-Mind, is an AI+3D robotics company founded by Tianlan Shao in 2016. The company focuses on industrial 3D cameras and software suites for robotic applications. Its main products include the Mech-Eye series, Mech-Vision, Mech-DLK, and Mech-Viz, which can be used in the logistics, automotive, home appliance, and steel industries.